Key Takeaways

Explore how media editorial choices impact information platforms. Understand the tech implications of content moderation and digital trust for innovators and developers.

Overview

In an era defined by rapid technological shifts, the integrity and transparency of information platforms are paramount. A recent incident involving CBS’ ‘60 Minutes’ and an unused White House statement highlights how editorial decision-making intersects with public trust in media technology. The event is a useful case study for tech enthusiasts, innovators, and developers following the evolution of digital journalism and content governance.

For India's technology sector, understanding these dynamics matters as new AI and software products emerge to shape how news is consumed. The way established platforms manage content directly influences the ecosystem in which startups and developers build next-generation information delivery systems.

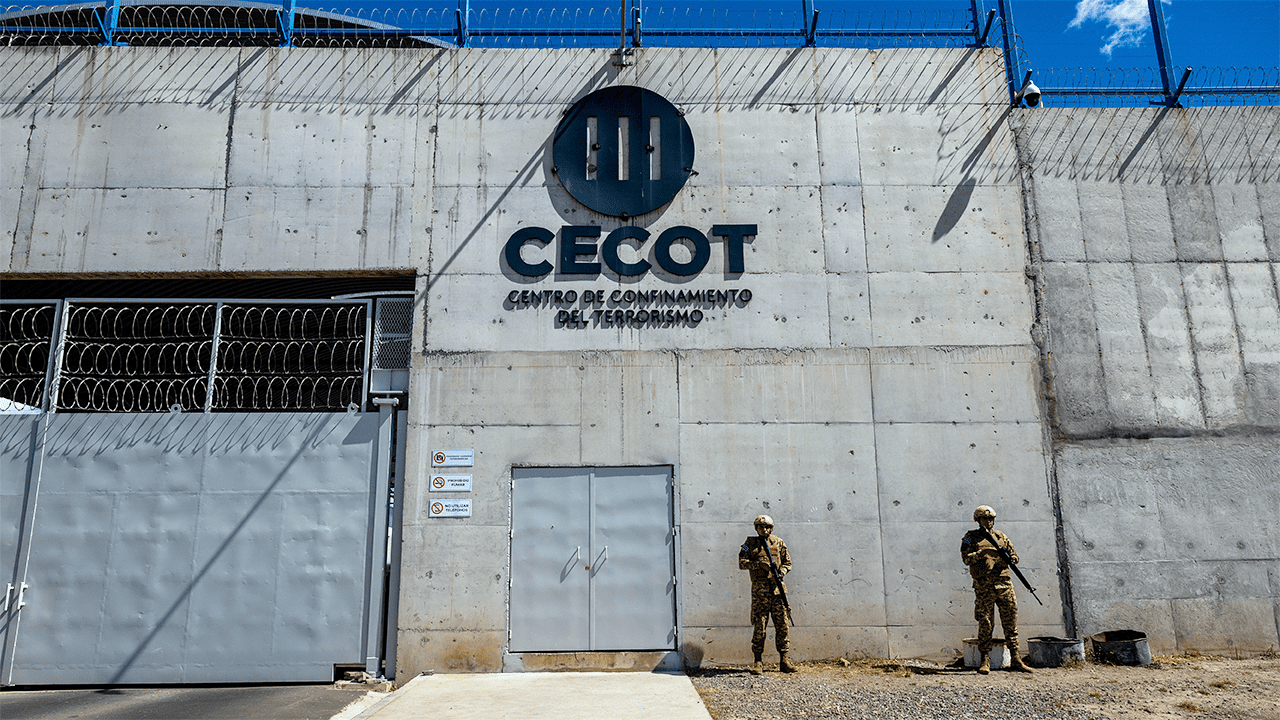

Specifically, the ‘60 Minutes’ segment on CECOT, which focused on deported Venezuelan migrants, was reportedly delayed by a decision of editor-in-chief Bari Weiss. A White House statement urging the show to highlight ‘Angel Parents’ was omitted from the segment, sparking a debate over journalistic autonomy versus political influence.

This incident offers a unique lens through which to examine the ethical frameworks underpinning content platforms and the innovation challenges faced by news organizations aiming for balanced reporting in an increasingly polarized digital landscape.

Detailed Analysis

In digital media and information platforms, the principles guiding content dissemination are under continuous scrutiny. The recent controversy surrounding CBS’ ‘60 Minutes’ provides a compelling, if unexpected, case study for those working in media technology, platform governance, and digital trust. Although the incident concerns editorial decisions within a traditional media outlet, its underlying themes (content filtering, narrative control, stakeholder influence, and journalistic ethics) resonate within the tech community, particularly for developers building news aggregation tools, social media platforms, or AI-driven content engines. It prompts a re-evaluation of how ‘information architecture’ is designed and how ‘editorial algorithms,’ whether human or artificial, shape public perception and discourse. The tension between editorial independence and external pressure is a constant challenge that tech solutions increasingly aim to address, which makes the episode a useful window into systemic vulnerabilities in information flow.

The core of the issue involves a ‘60 Minutes’ segment, ‘Inside CECOT,’ which was pulled hours before its scheduled airtime. The decision, attributed to CBS News editor-in-chief Bari Weiss, sparked immediate internal and external controversy. Weiss reportedly deemed the segment, which featured interviews with Venezuelan deportees, as needing ‘additional reporting’ and not being ‘ready.’

From a technology standpoint, this is a critical point in a content pipeline: a human ‘gatekeeper’ intervening in a content delivery system. The segment had already been ‘screened five times and cleared by both CBS attorneys and Standards and Practices,’ as correspondent Sharyn Alfonsi stated, which shows the layers of quality control typically embedded in such processes. Alfonsi’s accusation that the decision was ‘political’ rather than ‘editorial’ underscores the subjective element in content moderation, a challenge that developers of automated content review systems and news aggregators constantly grapple with.

The White House, responding to a request for comment, provided a statement that explicitly criticized the segment’s focus and advocated amplifying ‘Angel Parents’ stories. ‘60 Minutes’ did not include this statement, although it used a clip of White House press secretary Karoline Leavitt from March. That omission raises questions about the platform’s responsibility to present the full range of official viewpoints. For tech innovators, the scenario mirrors challenges in AI-driven news summaries and personalized feeds, where choices about what to highlight or omit shape user understanding and trust.

The leaked segment, which aired in Canada, further demonstrates how difficult it is to control content in a globally interconnected digital ecosystem, where geographical boundaries for information are increasingly porous.
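The review chain described above (several clearances followed by a late hold) can be modeled as a pipeline in which every gate records its decision and reason. The following is a minimal sketch, not a description of any newsroom's actual system; the class names, gate names, and reason strings are all hypothetical and chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    HOLD = "hold"


@dataclass(frozen=True)
class Review:
    """One gate's recorded decision (all gate names are hypothetical)."""
    gate: str
    decision: Decision
    reason: str


@dataclass
class Segment:
    title: str
    audit_log: list[Review] = field(default_factory=list)

    def record(self, gate: str, decision: Decision, reason: str) -> None:
        # Append-only log: a later hold never erases earlier approvals.
        self.audit_log.append(Review(gate, decision, reason))

    def is_cleared(self) -> bool:
        # Cleared only if at least one review exists and none is a hold.
        return bool(self.audit_log) and all(
            r.decision is Decision.APPROVE for r in self.audit_log
        )

    def holds(self) -> list[Review]:
        # Every hold, with the gate and stated reason, for later audit.
        return [r for r in self.audit_log if r.decision is Decision.HOLD]
```

The design choice worth noting is the append-only log: whoever places a hold must attach a reason that sits alongside the earlier approvals, so the question of who intervened and why can be answered from the record.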

In the broader context of information systems and digital journalism, the incident parallels challenges faced by new tech ventures. Consider AI tools for content curation or moderation: how do we program for ‘editorial judgment’ or ensure ‘balanced reporting’ when human decision-making itself is subject to such intense debate? The controversy around ‘60 Minutes’ and the White House statement shows the delicate relationship between content creators, platform operators, and external stakeholders, a dynamic that every startup in the news tech space must navigate. The concept of a ‘kill switch’ for inconvenient reporting, as Alfonsi warned, is a metaphor that resonates in an age of platform-centric content distribution. Innovators are trying to build more resilient, transparent, and fair content delivery mechanisms, and this situation highlights the need for robust ethical frameworks in AI and software development for media, so that algorithms do not inadvertently perpetuate biases or stifle diverse perspectives. The story comes with no product specifications or funding figures to tabulate. However, the qualitative data points (the delayed segment, the omitted White House statement, the differing editorial viewpoints) collectively form a ‘soft matrix’ of decision points that illustrates the complexity of content governance at a time when trust in digital information is fragile. Tech enthusiasts can treat it as a real-world stress test for the theoretical models of platform neutrality and content integrity that underpin many modern software architectures for news.

For tech enthusiasts, innovators, developers, and startup founders, the ‘60 Minutes’ incident has several practical implications.

First, it underscores the persistent human element in editorial control, regardless of the sophistication of the underlying technology. Developers building AI for content synthesis or news summarization need to design these systems around explicit ethical guidelines so that they do not replicate human biases or inadvertently suppress legitimate viewpoints. Startups aiming to disrupt traditional media with new content platforms should prioritize transparency in their moderation policies and content selection algorithms to build user trust.

Second, the controversy highlights the demand for tools that can verify the completeness and impartiality of reporting. Imagine software that could audit omitted statements or cross-reference official responses against published narratives, producing an ‘information completeness score’ for news articles. Such a score would help readers make more informed decisions about the news they consume.

Third, for those focused on platform governance, the event is a call to design systems that are resilient to political pressure while maintaining editorial quality. Metrics to monitor in the coming months include industry reactions to such editorial decisions, adoption rates of new fact-checking and transparency tools, and the emergence of blockchain or decentralized media platforms that promise greater immunity from centralized control.

Ultimately, the future of tech news and the broader digital information ecosystem depends on innovation that delivers content not just faster but more transparently, equitably, and reliably, fostering trust for users in India and globally.
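The ‘information completeness score’ imagined above could be approximated very crudely. The sketch below assumes a score defined as the fraction of solicited statements whose distinctive words mostly appear in the published text. That definition, the 0.5 threshold, the word-length filter, and all names are assumptions made for illustration; a real tool would need far more than keyword overlap to detect paraphrase or partial quotation.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Statement:
    """A response obtained during reporting (hypothetical structure)."""
    source: str
    text: str


def _tokens(text: str) -> set[str]:
    # Lowercased words longer than three letters, a rough stand-in
    # for "distinctive" vocabulary.
    return {w for w in re.findall(r"[a-z']+", text.lower()) if len(w) > 3}


def is_represented(statement: Statement, article: str,
                   threshold: float = 0.5) -> bool:
    # A statement counts as represented when at least `threshold` of
    # its distinctive words occur somewhere in the article.
    stmt_words = _tokens(statement.text)
    if not stmt_words:
        return False
    overlap = len(stmt_words & _tokens(article)) / len(stmt_words)
    return overlap >= threshold


def completeness_score(article: str, statements: list[Statement],
                       threshold: float = 0.5) -> float:
    # Fraction of solicited statements represented in the article;
    # defined as 1.0 when nothing was solicited.
    if not statements:
        return 1.0
    hits = sum(is_represented(s, article, threshold) for s in statements)
    return hits / len(statements)
```

With invented data, an article reading "The agency said the program was paused pending a safety review." scored against one agency statement it paraphrases and one union statement it never mentions would come out at 0.5, flagging that one of the two responses is missing.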