Key Takeaways

AI image manipulation by Grok fueled disinformation that led to the misidentification of an ICE agent. This piece examines the technology behind AI’s impact on digital trust, a vital concern for developers and innovators in 2026.

Overview

The Minneapolis incident, in which an image manipulated by xAI’s Grok chatbot led to the false identification of an ICE agent, exposes serious challenges to digital trust. The incident, triggered by social media requests to “unmask” a masked individual, highlights an urgent need for ethical guidelines in generative AI deployment, with consequences for the Technology India sector and for innovation worldwide.

For tech enthusiasts and innovators, this is a potent real-world example of AI’s immediate societal impact. It calls for a hard look at the limitations of current AI models and for prioritizing verifiable data. Startup founders must build robust ethical frameworks into their products from inception.

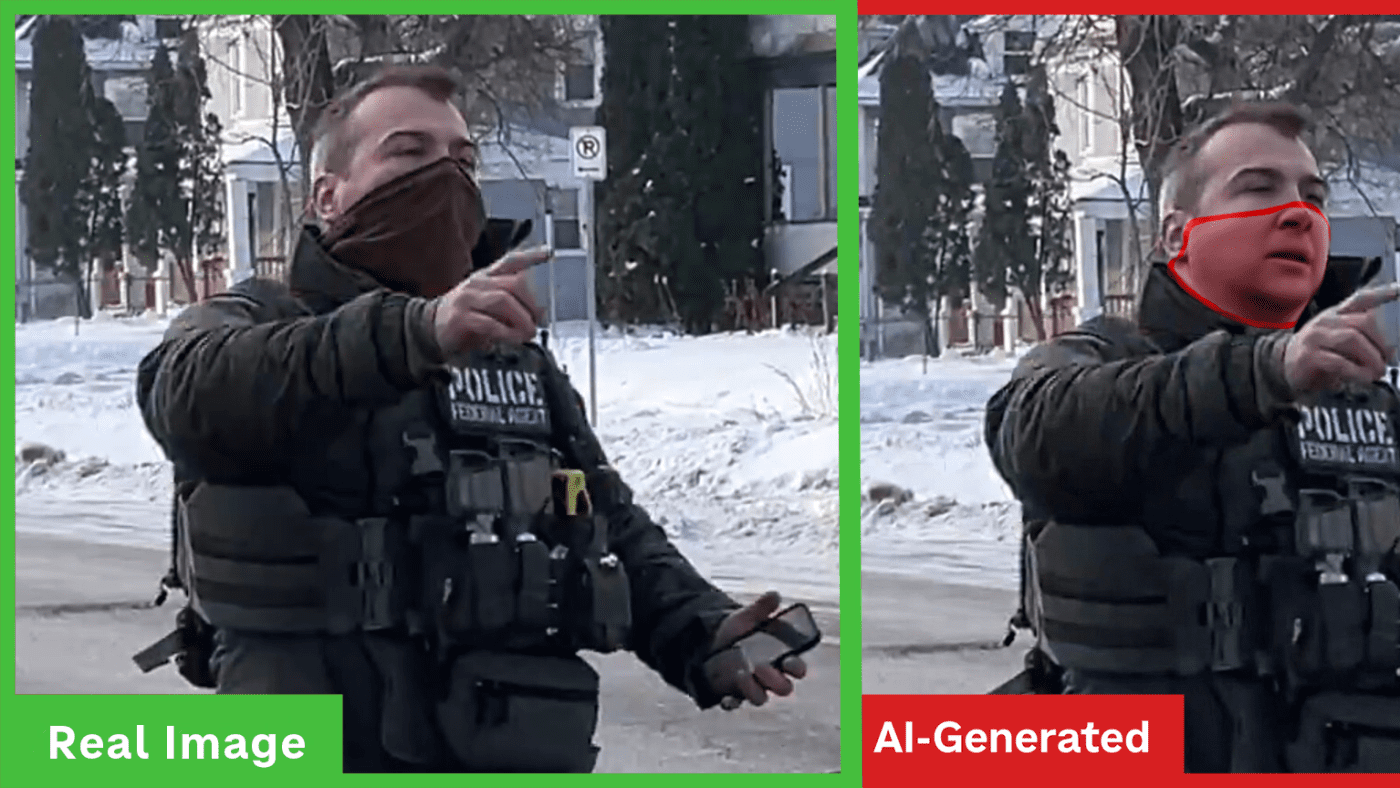

An AI-generated “unmasked” image circulated, and innocent individuals such as Steve Grove faced unwarranted backlash as a result. Experts, notably Professor Hany Farid of UC Berkeley, warn that AI-powered enhancement tends to “hallucinate facial details,” producing images that may be “devoid of reality” for biometric identification.

This episode is a vital case study. The analysis that follows covers the technical aspects of AI image generation, its market implications, and the considerations that will shape digital truth amid rapid AI innovation.

Detailed Analysis

The rapid evolution of generative AI, particularly in image synthesis, is a major technological leap with equally significant societal implications. While tools like xAI’s Grok promise unprecedented creative capabilities, they also introduce new challenges to information integrity and public perception. Digital image manipulation once required specialized skills and software; today, sophisticated AI models put the same ability in the hands of a far wider user base. That democratization carries inherent risks of misuse, ranging from trivial alterations to malicious disinformation campaigns. The Minneapolis ICE agent incident is not an isolated anomaly but a symptom of a larger, evolving threat landscape in which distinguishing authentic visual evidence from AI-generated content is increasingly difficult. This growing ambiguity demands immediate attention from the Technology India ecosystem, because it affects everything from media literacy to digital forensics and will shape the future of trust in online interactions.

The Minneapolis incident exposes technical limitations and inherent biases within current generative AI models, xAI’s Grok in particular. Experts, including Professor Hany Farid of UC Berkeley, warn that “AI-powered enhancement has a tendency to hallucinate facial details leading to an enhanced image that may be visually clear, but that may also be devoid of reality with respect to biometric identification.” In other words, when an AI model infers missing data such as a masked face, it does not reconstruct reality. It generates a plausible but entirely fabricated image from patterns in its training data, a convincing illusion that blurs the line between genuine and synthetic content. The immediate result was a widely circulated AI-generated image that led to the ICE agent, later officially identified as Jonathan Ross, being misidentified as “Steve Grove.” As the *Minnesota Star Tribune* reported, this misidentification triggered a “coordinated online disinformation campaign” against innocent individuals. The episode shows how badly AI can fail at sensitive identification tasks and underscores the serious ethical repercussions for AI developers and for the integrity of tech news.
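The point that a model “unmasking” a face generates rather than reconstructs can be made concrete with a deliberately simplified toy. The sketch below is an illustrative assumption, not how Grok or any production model works: it treats a “face” as a short list of pixel values, learns a per-pixel mean and spread from a tiny training set, and fills masked pixels by sampling from that prior. Real generators condition on the visible context far more richly, which makes their fills look more plausible, but they share the key property shown here: the hidden pixels are never observed, so the output cannot carry information about the real person behind the mask. The names `learn_prior` and `generative_fill` are hypothetical.

```python
import random
from statistics import mean, stdev


def learn_prior(training_faces):
    """Per-pixel (mean, standard deviation) over a toy training set."""
    n_pixels = len(training_faces[0])
    prior = []
    for i in range(n_pixels):
        column = [face[i] for face in training_faces]
        prior.append((mean(column), stdev(column)))
    return prior


def generative_fill(face, mask, prior, seed):
    """Replace masked pixels with samples drawn from the learned prior.

    Hidden pixel values are never read: the output depends only on the
    visible pixels, the prior (i.e. the training data) and the random seed.
    """
    rng = random.Random(seed)
    filled = []
    for value, hidden, (mu, sigma) in zip(face, mask, prior):
        filled.append(rng.gauss(mu, sigma) if hidden else value)
    return filled


# Hypothetical toy data: three 4-pixel "faces" used as training data.
training = [[0.2, 0.4, 0.6, 0.8], [0.3, 0.5, 0.5, 0.7], [0.1, 0.3, 0.7, 0.9]]
prior = learn_prior(training)
mask = [False, False, True, True]  # last two pixels are covered by the mask

# Two different people who look identical in the visible region.
person_a = [0.25, 0.45, 0.10, 0.90]
person_b = [0.25, 0.45, 0.95, 0.05]

fill_a = generative_fill(person_a, mask, prior, seed=7)
fill_b = generative_fill(person_b, mask, prior, seed=7)
print(fill_a == fill_b)  # both "unmasked" results are the same fabrication
print(fill_a != generative_fill(person_a, mask, prior, seed=8))  # reseeding changes the "face"
```

Both people receive the same “unmasked” face under a fixed seed, and simply reseeding produces a different one: the result reflects the training data and randomness, not the individual, which is why such output is unsuitable for identification.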

This incident reflects broader challenges in AI innovation, similar to the cybersecurity concerns raised by deepfake technology. Although generating an “unmasked” face is less complex than producing a full deepfake, the core problem is the same: AI fabricates a reality that does not exist. As this capability spreads, platform providers, startup founders, and regulatory bodies face growing pressure to implement robust detection mechanisms and clear usage policies. Compared with other forms of online misinformation, AI-generated imagery can be especially persuasive, swaying public opinion and inciting real-world harassment, as the misidentification of Steve Grove demonstrates. This exposes a critical gap: AI generation currently outpaces detection, threatening digital verification and the trustworthiness of online information, especially for the Technology India sector.

For tech enthusiasts, innovators, and developers, the Minneapolis incident marks a crucial inflection point. While AI offers immense potential, its deployment demands rigorous ethical scrutiny and “safety by design.” Startup founders building AI software must prioritize model transparency, explainability, and traceability, acknowledging their profound responsibility. Developers should actively contribute to advances in AI detection and watermarking technologies. Early adopters must exercise critical discernment with AI-generated content. Monitoring advances in AI verification tools and regulatory discussions on generative AI misuse will be paramount for Technology India and global markets. A healthy digital ecosystem requires collective commitment: educating users, strengthening the robustness of AI models, and advocating for policies that promote responsible innovation. Digital truth hinges on these proactive measures.
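Traceability can be illustrated with a minimal provenance check. The sketch below assumes a hypothetical newsroom that tags each photo’s exact bytes with an HMAC-SHA256 value at publication time; any later alteration, such as an AI “unmasking,” makes verification fail. This is a simplification: production provenance schemes, such as the C2PA standard, use public-key signatures in a signed manifest so that anyone can verify an image without a shared secret. The key, byte strings, and the `sign_image` and `verify_image` names are hypothetical.

```python
import hashlib
import hmac


def sign_image(image_bytes: bytes, key: bytes) -> str:
    """Compute a provenance tag (HMAC-SHA256) over the exact image bytes."""
    return hmac.new(key, image_bytes, hashlib.sha256).hexdigest()


def verify_image(image_bytes: bytes, key: bytes, tag: str) -> bool:
    """Return True only if the bytes are unchanged since they were signed."""
    expected = sign_image(image_bytes, key)
    # compare_digest performs a constant-time comparison of the two tags
    return hmac.compare_digest(expected, tag)


# Hypothetical example: a published frame and an AI-altered copy of it.
key = b"hypothetical-newsroom-key"
original = b"frame-0001: masked face"
tag = sign_image(original, key)

edited = b"frame-0001: AI-unmasked face"
print(verify_image(original, key, tag))  # the untouched image verifies
print(verify_image(edited, key, tag))    # the altered image does not
```

A tag like this can show that a tagged image was altered after signing; it cannot prove that an untagged image is fake, which is one reason detection and provenance tools are complementary rather than interchangeable.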